A Surprise In Obama's Poll Numbers

[Updated, see below for additional information from Mark Blumenthal of Pollster.com.]

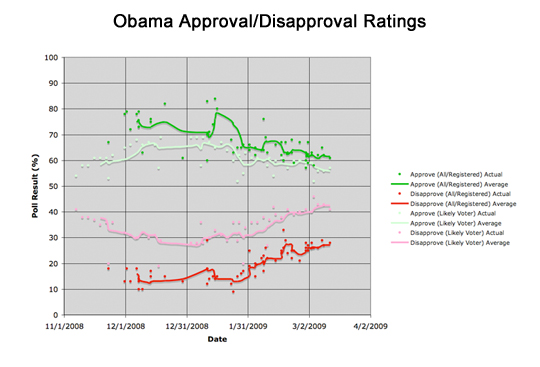

It really is a bit early to focus on President Obama's approval ratings in the polls, I know. But, rather than looking at the overall picture of how he's doing, I have been noticing something interesting which I don't believe others have picked up on -- Obama's numbers change noticeably depending on the sample used by the pollsters. Polls of "likely voters" (LV) give different numbers than polls of either "registered voters" (RV) or "all adults" (A). Obama's LV approval rating is about five points lower than the RV/A numbers. The difference is more pronounced in the disapproval ratings, where LV numbers are fully ten points higher than RV/A numbers.

Is this significant? I have to admit, I don't know enough about polling to come to any conclusions I can be confident of. Perhaps I should send this column to Nate Silver over at FiveThirtyEight.com to see what he thinks. Like many people, I did a lot of poll watching over the course of the presidential election, and even wrote a series of articles with graphs to track what I thought was going on. But I am strictly an amateur at this stuff, I fully admit.

Which is why I truly don't know the explanation of this phenomenon. But I know enough to identify it, at the very least.

You can see what I'm talking about most clearly by looking at Obama's tracking graph over at Pollster.com. The graph is interactive, so you can roll your mouse over each dot in the chart to see which poll it came from. Spend fifteen or twenty seconds with this graph, and you'll see the same effect I'm talking about. There is an average line for both approval and disapproval. Check the dots (data points from individual polls) both above and below the "approval" line. Almost all the points above are listed as either "n=A" or "n=RV," and almost all the points below the line are listed as "n=LV."

Now look at the "disapproval" line and dots. The effect is easier to see here. Of all the dots above the line, only three are not LV -- two A and one RV. Of the dots under the line, only three are LV; the rest are A or RV. And the difference between the two sets of numbers has stayed at a pretty steady ten points since the post-election polling started.

Meaning we really need a better graph to see what is going on. Due to my rather limited experience creating charts (which begins and ends with a few things I know how to do in Excel), I turned once again to my favorite charting blogger, Sam Minter of abulsme.com. Sam helped with the charts I used in my "Electoral Math" series tracking the Electoral College numbers throughout last year, and graciously put together the chart I needed to clearly show this effect:

[Click on the charts to see a larger image.]

This shows quite obviously that the dark red and dark green lines (which track RV/A) show a clear divergence from the light green and pink lines (which track LV). This divergence, as I said, is consistently (roughly) five points for the approval rating, and a bigger gap of ten points on the disapproval rating.

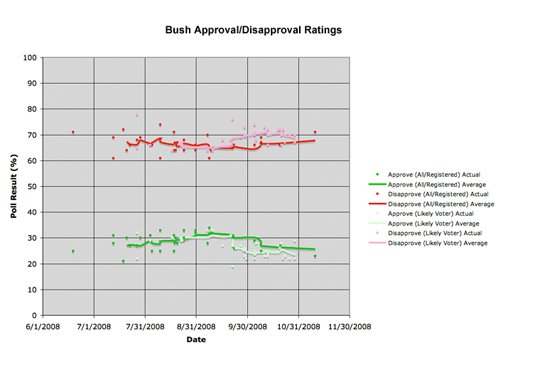

Minter then decided to put together another chart, to see if this was just a normal effect of polling. He gathered numbers for the most recent few months' worth of polling on President Bush (from 7/08 to 11/08) for comparison. Here is his result (you'll notice the "approval" is now on the bottom, and the "disapproval" is now on the top, due to Bush's generally low poll numbers at this point).


As you can see, Bush's average lines do not really diverge much, and actually cross here and there. There is not much difference (certainly nothing as large as ten points, or even five points) between the lines.

So what does this all mean? Well, this is the point where we jump from analyzing data to wild speculation. You have been warned. We are now entering the realm of opinion, not fact.

Looking at Pollster.com's breakdown of Democratic approval ratings, Republican approval ratings, and Independent approval ratings doesn't shed much light on what is going on either. The polling samples for these sub-groups are a lot smaller (meaning a lot higher error margins), and there isn't enough data to really show any more than general trends among the three groups. It certainly looks like the disconnect has nothing to do with partisanship, but again, the samples are too small and there don't appear to be enough of them to draw any solid conclusions on this issue.

One thing to consider when looking at all the charts and data is how pollsters pigeonhole each group. If they're polling all adults, then all they have to ask is: "Are you old enough to vote?" or maybe: "Are you eligible to vote?" Likewise, asking: "Are you a registered voter?" is enough to figure that one out as well. But what, exactly, is a "likely voter"? Some polling organizations just ask you: "How likely is it that you'll vote in the next election?" with a range of vague answers such as "very likely" or "not so likely." And then some pollsters ask "Did you vote in the last election?" or even "Did you vote in the last two elections?" -- you'll notice that the second one is a tougher bar to hit, since it means you'd have had to have voted in at least one "off-year" (non-presidential) election.

But however you define "likely voters," they are always a subset of the universe of "registered voters" (which is itself a subset of "all adults"). And although different polling organizations have different ways of determining the likelihood of a poll respondent actually voting, the numbers are pretty consistent. No matter which pollster is asking which questions, there still seems to be a pretty solid gap (five points on approval, ten points on disapproval) between all the LV polls and all the RV/A polls.

It's gotten to the point where I can just hear the poll numbers and know what their sample was. If the approval is in the high 50s and the disapproval is around 40, the poll used likely voters. If the approval is in the low 60s (or above) and the disapproval is around 30, it is either registered voters or adults. That's as of this writing, of course -- Obama's poll numbers will change over time.

So, having discounted partisanship and polling methodology, what is causing this difference? Again, causality is always very hard (if not impossible) to prove beyond a reasonable doubt, even for statisticians, so please remember that I'm only rationally guessing here.

Sam Minter, when he sent me the charts, pointed something out which I hadn't taken into consideration -- that there is also a difference in the number of people who don't answer either "approve" or "disapprove" when asked the question:

My gut feel here is that these differences are actually somewhat tied to how "undecideds" or "non-responders" are taken into account. If you take my "average" lines for approve and disapprove and sum them at each point, for All/Registered (RV/A) you will see that the sum varies from 79.2% to 92.4% with an average of 87.5%. Meanwhile, for likely voter the sum varies from 88.6% to 99.0% with an average of 95.1%.

This means that the RV/A group has a much higher undecided rate... which could either be a real effect or just that the "likely voter" polls push harder for an answer. It actually makes sense, though, that people who are less likely to vote are more undecided, so let's assume it is a real effect.

What would more undecided people result in? Hmmm... well, at first you'd think that it would lower BOTH the approve and disapprove numbers. But what we are seeing here is lower disapprove, but HIGHER approve. So what does this get you? Maybe there is a real effect here... something along the lines of the less-likely voters being more willing to give the benefit of the doubt and give an "Approve" rating, and less likely to take a leap and say "Disapprove."
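Minter's arithmetic can be checked with a few lines of Python. This is only a rough sketch: it assumes that "approve," "disapprove," and "no opinion" add up to 100 percent in every poll, and the names `SUMS`, `no_opinion_share`, and `summarize` are made up for illustration. The six input figures are the ones quoted in his email.

```python
# Sums of the average approve + disapprove lines, in percent, as quoted
# in Minter's email above.
SUMS = {
    "RV/A": {"lowest_sum": 79.2, "highest_sum": 92.4, "average_sum": 87.5},
    "LV": {"lowest_sum": 88.6, "highest_sum": 99.0, "average_sum": 95.1},
}


def no_opinion_share(approve_plus_disapprove: float) -> float:
    """Percent of respondents who gave neither answer.

    Assumption: approve, disapprove, and no-opinion total 100 percent.
    """
    return 100.0 - approve_plus_disapprove


def summarize(sums: dict) -> dict:
    """Convert each quoted sum into the implied no-opinion share."""
    return {
        sample: {
            label: round(no_opinion_share(value), 1)
            for label, value in stats.items()
        }
        for sample, stats in sums.items()
    }


if __name__ == "__main__":
    for sample, stats in summarize(SUMS).items():
        print(sample, stats)
```

Under that assumption, the average no-opinion share works out to about 12.5 percent for RV/A polls versus about 4.9 percent for LV polls. Note that the lowest sum corresponds to the highest no-opinion share, and vice versa.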

He also pointed out later that the Bush numbers were taken from the very end of his term, and by that time most people had figured out their opinion of him, negative or positive, so there were fewer undecideds in general. Which makes a lot of sense. Perhaps this is some sort of "honeymoon effect" for Obama, while the public figures out how it feels about him. I would need data from the beginning of a few other presidential terms to check this, but did not have time to research it to this level of depth.

And maybe the pollsters are creating the phenomenon to some extent. If, as Minter suggests, they are pushing likely voters harder to answer either "approve" or "disapprove," and are more willing to accept "undecided" from a non-likely voter, then that would indeed skew the data a bit.

I have my own hypothesis, though, and I leave it for a real statistician to look into further to prove me right or wrong. Bear with me here for some math to set it up. One thing we don't know from the chart data is the relative size of the "likely voter" group when compared to either of the other two. Remember, the LV group will always be smaller than the RV or A group. Meaning that in a poll of RV or A, some percentage of the respondents would have been LV (as well as RV or A). Some would not -- registered voters or adults who didn't qualify for "likely voter" status. Call the subsets LV and non-LV. But what is the ratio between the two? We need the answer to this question to be able to draw any solid conclusions.

For example, say they were even. Posit (for the sake of argument) that half of the people polled in an RV or A poll are LV, and half are non-LV. We already know what a poll of LV looks like -- 40 percent disapproval. So, to get to the final RV/A disapproval number of 30 percent, the non-LV respondents would have to have disapproved at a 20 percent rate (because, since the samples are half-and-half, the two rates have to average out to 30 percent).

Still following this? Without the math terms, what it means is that the non-likely voters approve of Obama at a higher rate and disapprove at a lower rate than the graph even shows. To know exactly what the numbers are for the non-LV approval and disapproval, we would need to know the exact ratio between them and the LV sample. But no matter what this ratio is, the non-LV people, in order to move the final percentages, approve of Obama a lot more strongly than the likely voter segment of the public. Or, to put it even more simply, non-likely voters love Obama a lot more than these polls can show. Meaning the difference between the two groups is even more pronounced than you see on the charts.
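For anyone who wants to play with the ratio, here is a minimal Python sketch of the same back-of-the-envelope math. It treats an RV/A poll's disapproval number as a weighted average of an LV rate and a non-LV rate, and solves for the non-LV rate. The function name `implied_non_lv_rate` and the candidate LV shares are purely illustrative assumptions; only the 40 percent and 30 percent figures come from the discussion above. It also assumes that the LV polls accurately describe the likely voters hidden inside the RV/A polls, which may not hold if pollsters differ in other ways.

```python
def implied_non_lv_rate(combined_rate: float, lv_rate: float, lv_share: float) -> float:
    """Solve combined = lv_share * lv + (1 - lv_share) * non_lv for non_lv.

    combined_rate: rate in the RV/A poll, in percent (e.g. 30 for disapproval)
    lv_rate:       rate among likely voters, in percent (e.g. 40)
    lv_share:      assumed fraction of RV/A respondents who are likely voters
    """
    if not 0.0 <= lv_share < 1.0:
        raise ValueError("lv_share must be at least 0 and less than 1")
    return (combined_rate - lv_share * lv_rate) / (1.0 - lv_share)


if __name__ == "__main__":
    # Hypothetical LV shares; the real ratio is unknown.
    for share in (0.25, 0.5, 0.6, 0.75):
        rate = implied_non_lv_rate(combined_rate=30.0, lv_rate=40.0, lv_share=share)
        print(f"LV share {share:.2f} -> implied non-LV disapproval {rate:.1f}%")
```

With a half-and-half split this gives the 20 percent figure from the example. The larger the assumed LV share, the lower the implied non-LV disapproval has to be, and at an LV share of three-quarters it would have to fall all the way to zero, which suggests the simple mixture picture breaks down somewhere before that point.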

You can discount this by saying that non-likely voters are also much more politically apathetic, and hence draw the conclusion that they're not paying as much attention to politics now. Or, as Minter did, you can say that since they are less informed and politically apathetic, they are much more likely to give Obama the benefit of the doubt at this point -- meaning the effect should decrease steadily over time, as people do make up their minds.

But I think there's a demographic trend here which has not been identified. I think there are a lot of people who voted for Barack Obama who either hadn't voted before, or hadn't voted in a long time. Both young voters and older previously-disillusioned voters. Obama drew more people into the voting process, in other words. This was confirmed by endless polling during the campaign and isn't even really debatable now -- more people were more interested in voting in 2008 than in the recent past.

What I think is that the people in the "non-likely voter" group are strong supporters of President Obama. And that they are still supporting him in large numbers now. But because they've only voted in one recent election, or because they answer some flavor of "I don't know" when asked if they plan on voting in the 2010 midterm congressional elections, they are dropping off the radar somewhat by being pegged as "non-likely voters" by the professional pollsters.

As I said, I don't know whether I'm right or wrong on this. But I think that there is a segment of the American public which is big enough to steadily sway the approval polling by plus five points, and the disapproval numbers by minus ten points. I don't know the size of this segment, either in real numbers or in comparison to the "likely voter" demographic. But the smaller in size this group is (when compared to "likely voters"), by definition the stronger they have to be supporting Obama (in order to have this effect on the total polling numbers). I think this segment represents the first-time voters that Obama drew to the voting booth last November. And I further think that (because this effect is flying below the radar of the media and possibly even the pollsters themselves) it represents an unnoticed source of political capital for President Obama.

But then, I could be wrong. Maybe it's time to write Nate Silver that email....

[Update: Mark Blumenthal of Pollster.com wrote me a nice note with a few links I'd like to share with everyone. He wrote an op-ed article in the New York Times last February (in the midst of the primary campaign, and when the New Hampshire results were still fresh) in which he complains of the problem:

Despite 22 years of experience as a Democratic pollster, I can only speculate about what might be going wrong.

Why? Because so many pollsters fail to disclose basic facts about their methods. Very few, for instance, describe how they determine likely voters. Did they select voters based on their self-reported history of voting, their knowledge of voting procedures, their professed intent to vote or interest in the campaign? Did they use actual voting history gleaned from official lists of registered voters?

Fewer still report the percentage of eligible adults that their samples of likely voters are supposed to represent. This is a crucial statistic, given the relatively low percentage of eligible adults who participate in party primaries. (In California, for example, turnout surged in 2008 but still amounted to about 30 percent of the state’s eligible adults.)

And, more recently, Blumenthal writes of the problem of determining likely voters, and how Rasmussen's numbers are largely to blame for the effect (towards the end of the article):

...the fact that Rasmussen screens for "likely voters" and perhaps other, harder to detect differences stemming from their use of an automated methodology -- yield lower Obama approval scores than other pollsters. Obama's average approval percentage has been roughly 3 points lower on Rasmussen than on other polls, and his disapproval score has been roughly 14 points higher. You can easily see the difference in the disapproval scores in our chart below. There are two bands of red disapproval dots -- click on any of the higher numbers and you will see that virtually all are Rasmussen polls.

Thanks to Mark for sharing these, which help to explain the LV gap a little better.]

Cross-posted at The Huffington Post

-- Chris Weigant

Chris,

It looks to me, as an avid fan of your polls and charts, like you may be on to something big here. I hope you're right and that this will all translate into a big boost in political capital for Obama...or else, Biden may have to revive his famous, or...infamous 'Augean Stables' rant somewhere again...maybe even go national with it! Do you think the media would cover it...who knows...they certainly wouldn't understand it, that's for sure. But, I digress...

Anyway, I'll bet that, between a revival of the 'Augean Stables' rant, or two, and a few more town hall meetings like the one Obama held in your state today, those polls and charts are going to be looking pretty sweet from here on in.

"(A) demographic trend here which has not been identified. I think there are a lot of people who voted for Barack Obama who either hadn't voted before, or hadn't voted in a long time. Both young voters and older previously-disillusioned voters."

This is what I was thinking to myself in the second paragraph of your post. Obama made voting "cool" for a vast number of American youth, and "cool" again for millions of Americans who'd dropped out of politics altogether.

Here's my hope: that the GOP goes on fooling itself, seeing what it wants to see. That Obama can get those millions of Americans back to the polls in 2010 and 2012 to really, really crush the GOP. Because until Republicans get hammered for running on their "values," the crazies will still be in charge of the party.

Once again, I am amazed at the "us and them" mentality.

Regardless of that, the simple fact is, it's all downhill for the Obama administration from here on out.

Most here know my opinion of polls. For those who don't, allow me to elucidate...

Polls are useless. They are only good to show the biases of the poll takers and have no real bearing in reality. Anyone who uses a poll solely to make a point is intellectually dishonest or just plain lazy.

As an aside, CW's use of polls was to make a point about polls. In that regard, it's perfectly acceptable and the previous statement does not apply. :D

But seriously, I find this faith in polls just another example of political bigotry. The polls are sacrosanct and "dead on ballz accurate" (it's an industry term) when they say things that people WANT to hear.. But when the polls point to something that said persons don't like?? Then they are nothing, worthless and biased...

No one should use a poll as ANY part of an argument unless A> THEY themselves went out and talked to the thousands of people and B> completely disclose their exact reasoning behind the people that were chosen to be polled and the questions/answers that these polled people were asked.

Without such knowledge **ALL** polls are "nothing, worthless and biased" regardless of whether they say things I want to hear or not.

Michale.....

Elizabeth -

Augean stables rant? I hadn't heard of that one. I assume it has something to do with metaphorically shoveling horse manure? Do tell!

Michale -

Ah, c'mon! Spoilsport! I haven't talked about polls since the election, and even held off when people were frothing at the mouth over the first two weeks of polling in the Obama administration.

I just like making charts, if the actual truth be known. Charts are cool!

Heh.

-CW

To everyone -

Sam Minter has posted a much more full account of his thoughts. What I excerpted above was from emails he had sent me, and I really didn't have his permission to post them at the time of my deadline, so I just used a bit of them (and hoped he wouldn't mind). I later confirmed his approval, and his posting has pretty much the entire email thread he sent me. He graciously left out my emails, which were full of stupid questions on my part (like: is it even possible to make this chart??).

But for anyone who made it to the end of this article, I strongly encourage checking Minter's post out.

-CW

Chris,

You have so heard of the Augean Stables rant by then-VP-elect Biden, and so has everyone else.

Unfortunately, this rant is more commonly known as the 'Obama will face a crisis within his first six months as President - mark my words' rant, thanks to all of our friends in the media/blogosphere/punditocracy.

This was the October 2008 speech in which Biden addressed a select gathering of Obama's biggest donors and supporters who are leaders in their own right with influence in their respective communities.

The message of the night was one based on loyalty and support. Biden was warning those community leaders that the next President is going to inherit an extremely difficult set of challenges - a monumental mess on a scale of magnitude comparable to the Augean Stables, no less! (A classical reference wholly lost on the media, I might add) And, tough decisions are going to have to be made - decisions that Biden said may not appear, at first glance, to be either the right decisions or very popular. But, he told the audience, those decisions would be sound decisions. In fact, he warned Obama's supporters that popular decisions would probably not be sound ones. Biden was, in essence, urging and pleading with the audience to not only stand with the President during such times but to be vocal and public about their support for him when the iron hits the fire.

Senator and VP-elect Biden's message was both profound and powerful and, in the final analysis, a remarkable validation of Barack Obama and of the exceptional President that he would be.

In other words, Biden has your back, Barack! Sadly, based on his own comments following the press coverage of these remarks, the President-elect appeared not to know this. I trust that President Obama does understand this now and knows that VP Biden is his wisest and most loyal defender and protector...next to the First Lady, herself!

It was obvious, from the barrage of their misguided reporting, that Biden's critics throughout the media/blogosphere/punditocracy had not even bothered to listen to his full remarks. But, truth be known, they wouldn't have understood them anyway.